Whatever your opinion on the iPhone 4, it’s hard to disagree that the Retina display is really rather excellent. Phrases like “It looks like print” are cliched, and entirely true. It’s the single biggest reason I’m not buying an iPad this year; no point buying one now, when I suspect one with a Retina-like display will be released next year.

The thing I find most fascinating about it is that it reminds me of when colour depth ceased to be a big deal to most people in terms of specifications. (At its extremes in the early days of home computing, to get the highest resolution on a BBC Micro, 640 x 256, you could only display two colours at once.) As soon as technology advanced enough so that 24-bit, 16.7 million colours in high resolution became standard, the general consumer stopped caring, as that’s the most granularity the eye can make out. Exactly the same is now happening with pixel density on mobile devices; we are approaching – or at, depending on your point of view – the point where it’s impossible for the eye to make out individual pixels, and there’s little point going much further. And whilst Apple may have got there first, this kind of display will surely become standard across all high-end phones over the next couple of years.

With this, however, comes its own set of problems. The user interface of the iPhone was carefully designed for the finger, which is one reason why Mac OS X and your common-or-garden desktop variety of Windows will never work that well on touchscreen devices. Render each pixel the same way on an iPhone 4 as on previous models, and unless you cut off three quarters of your finger lengthways and poke at the device with a bloody stump, you’d have problems; to say nothing of the squinting. Everything would be rendered at half the width and half the height, a quarter of its previous area.

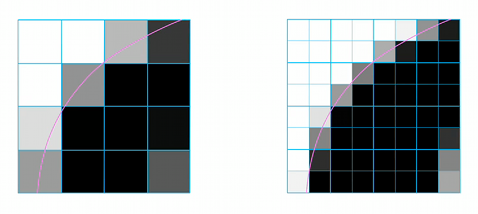

The answer was resolution independence, and with the iPhone, it was easy. The screen on an iPhone 4 has exactly four times as many pixels as older iPhones; 640 x 960, compared to 320 x 480. Where one pixel was rendered before, you instead render a 2 x 2 block of four. The result: everything is rendered the same size as before – icons, text, the lot – but far more sharply. Everybody’s happy. (Of course, Android also has resolution independence; indeed, with the greater variety of handsets with different specifications, it’s a necessity. But there’s a specific reason why I’m approaching this from an iPhone point of view, as will become apparent.)
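To make the doubling concrete, here is a minimal sketch in Python. The function name, the `SCALE` constant and the example icon rectangle are all made up for illustration; this is not Apple's API, just the arithmetic behind "where one pixel was rendered before, you instead render four".

```python
# A rough sketch of pixel doubling. All names here are hypothetical,
# chosen for illustration only.

def points_to_pixels(x, y, width, height, scale):
    """Map a rectangle in layout units to device pixels by a whole-number scale."""
    return (x * scale, y * scale, width * scale, height * scale)

OLD_SCREEN = (320, 480)   # older iPhones, as quoted in the text
SCALE = 2                 # iPhone 4: twice as many pixels along each axis

new_screen = (OLD_SCREEN[0] * SCALE, OLD_SCREEN[1] * SCALE)
pixels_per_old_pixel = SCALE * SCALE

# An illustrative icon rectangle, laid out once in the old coordinate space.
icon = (20, 40, 57, 57)

print(new_screen)
print(pixels_per_old_pixel)
print(points_to_pixels(*icon, scale=1))      # older iPhone
print(points_to_pixels(*icon, scale=SCALE))  # iPhone 4
```

The layout is written once in the old 320 x 480 coordinate space; only the final multiplication changes per device, which is why a whole-number scale makes this case so simple.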

So far, so bloody obvious. However, it’s important to note that what seems a natural thing to do with mobile devices isn’t traditionally the done thing with operating systems. For instance, my 17″ MacBook Pro runs at a maximum resolution of 1920 x 1200. And yet, apart from watching HD video, I never actually use it in this resolution – instead, I bump it down to 1680 x 1050. Why? Because at the maximum resolution, the user interface is simply too small to be comfortable for me. Usable, yes, but not comfortable. Unlike the iPhone, one pixel in the UI means one pixel, and everything shrinks or expands accordingly.

This is irritating enough now. But what of the future? The standard measurement for pixel density is ppi – that’s pixels per inch. The aforementioned 17″ MacBook Pro, at its maximum resolution, has a ppi of roughly 133. The iPhone 4 has a ppi of 326 – around two and a half times greater. Now we have these amazing devices that live in our pockets with such great screens, how much longer will people tolerate a lower pixel density on their main computer displays? Doesn’t it feel odd to have sharper displays on our phones than at our main workstations?
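For anyone who wants to check the sums, ppi is just the number of pixels along the diagonal divided by the diagonal length in inches. The sketch below assumes the laptop panel measures exactly 17.0″ corner to corner (a nominal figure, so the result lands within a pixel or so of the number quoted above) and takes the iPhone 4's 326 ppi as given rather than deriving it. The function name is my own.

```python
import math

def pixels_per_inch(width_px, height_px, diagonal_inches):
    """Pixel density: diagonal length in pixels over diagonal length in inches."""
    return math.hypot(width_px, height_px) / diagonal_inches

# Assumption: the panel diagonal is exactly 17.0 inches.
laptop_ppi = pixels_per_inch(1920, 1200, 17.0)

IPHONE_4_PPI = 326  # figure quoted in the text, not derived here
ratio = IPHONE_4_PPI / laptop_ppi

print(f"laptop: {laptop_ppi:.1f} ppi")
print(f"iPhone 4 is {ratio:.2f}x denser")
```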

Of course, 30″ monitors aren’t suddenly going to develop 326ppi displays overnight; the ramifications for graphics cards alone are “difficult”, let alone physically making the display. It’s much easier to create smaller displays with a massive pixel density than larger ones. But it’s not difficult to imagine screens – especially smaller laptop screens – vastly increasing in pixel density in the forthcoming years; and then full resolution independence, with UI elements rendered at a size independent of pixel density, will become nothing other than a necessity. A 13″ laptop screen, with the iPhone 4’s pixel density? Yes bloody please.
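As a rough illustration of what "a size independent of pixel density" means in practice: pick the physical size you want on screen, then work out how many pixels that takes on each display. The sketch below is Python with invented names; the half-inch button and the 16:10 aspect ratio for the imaginary 13″ panel are assumptions of mine, not anything announced.

```python
import math

def inches_to_pixels(size_inches, ppi):
    """Pixels needed to draw something at a fixed physical size."""
    return round(size_inches * ppi)

def panel_resolution(diagonal_inches, ppi, aspect_w, aspect_h):
    """Pixel dimensions of a panel with the given diagonal, density and aspect ratio."""
    unit = diagonal_inches / math.hypot(aspect_w, aspect_h)
    return (round(unit * aspect_w * ppi), round(unit * aspect_h * ppi))

BUTTON_INCHES = 0.5  # hypothetical target size for a button

# Same physical button on a ~132 ppi laptop and a 326 ppi phone-class display.
for ppi in (132, 326):
    print(ppi, "ppi ->", inches_to_pixels(BUTTON_INCHES, ppi), "px")

# Assumption: a 16:10 panel. What would a 13-inch screen at 326 ppi need?
print(panel_resolution(13, 326, 16, 10))
```

The button ends up the same size under your finger or cursor on both screens; the denser display simply spends more pixels drawing it. The second function also hints at why graphics cards are the sticking point: the pixel count climbs very quickly.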

Murmurings of full resolution independence for Mac OS X have been around for many years; some backend support was built into OS X Tiger in 2005. It was developed further in Leopard, and I expected the feature to be fully completed with the current version of OS X, Snow Leopard, in 2009; with that release being dedicated to tidying up the OS, it seemed like the ideal time to implement it. Sadly not. The work remains half-done, not visible to the end user, unless you fiddle with the developer tools.

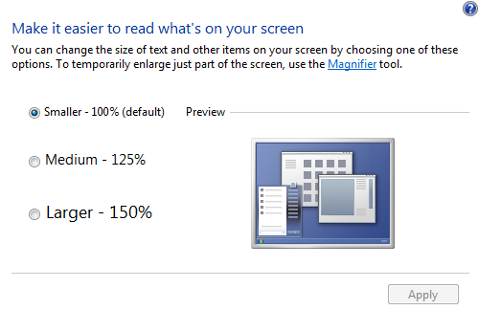

Meanwhile… Windows 7? Pop into Control Panel, click “Appearance and Personalization”, then “Make text and other items larger or smaller”, and there you have it – you can select whether to view things at 100%, 125%, or 150%, regardless of screen resolution. I haven’t played with it much, and I know there are issues with programs that aren’t designed to handle it; the implementation is not perfect. Still: it’s there. (If any Windows people want to interject with how well the feature works in practice, feel more than free.)

For those of you worried about where this is leading, despite appearances, I’m generally not one of those people who complain that Apple has slowed Mac OS X development for the sake of iOS devices. As much as anything else, Mac OS X is a relatively mature operating system; there’s very little I ache for in the current version. But resolution independence is now getting to the point where it’s becoming important. The problem is far more difficult than with the iPhone; you need to cope with multiple resolutions, and multiple displays, with multiple ppi values. It’s not just a case of doubling up the pixels, and the problems with it are presumably why it remains half-finished. (And with Apple, with their emphasis on the user interface, any implementation would need to be absolutely perfect.) But when the announcements for OS X 10.7 start appearing, I’ll be very disappointed if it isn’t included. Especially with Windows pushing forward with the feature. Without it, Mac OS X will actively start to hold back future hardware developments – hardware developments that the iPhone 4 is paving the way for.

And I’d like to finally be able to use my laptop at the resolution I bloody paid for, please.

One comment

All The Buttons on 23 September 2010 @ 10am

I know what you mean about the iPad – suddenly other displays feel disappointing after the iPhone 4, and it’s particularly noticeable on a handheld device. But I’d be surprised if a Retina Display comes for the iPad any time soon. For one thing, there’s the obvious technical barrier (although a double-resolution display, while not quite ‘retina’, might be within the realms of possibility). The biggest barrier, though, would be Apple’s modus operandi. The chances are that subsequent generations of iPads will see accelerating sales just as the iPhone has, which will leave the first gen devices in the minority. I cannot see Apple tolerating that sort of fragmentation where a minority of early devices have a different display configuration from everything that comes later. I reckon the next update will be all about adding a camera and making it lighter. A double resolution display might come a year after that.
